All the ways you can self-host LLMs

From laptops to clusters, here's a practical guide to self-hosting LLMs.

There’s a reason self-hosting keeps coming up in conversations around LLMs lately. Running your own LLM unlocks things the big hosted APIs can’t: total data control and privacy, offline inference, custom fine-tuning, and costs that don’t burn a hole in your wallet.

If the swirl of “too many options” out there is what brought you here, this guide walks through:

-

Every major self-hosting approach; from running models in your basement on your laptop to managing servers and clusters.

-

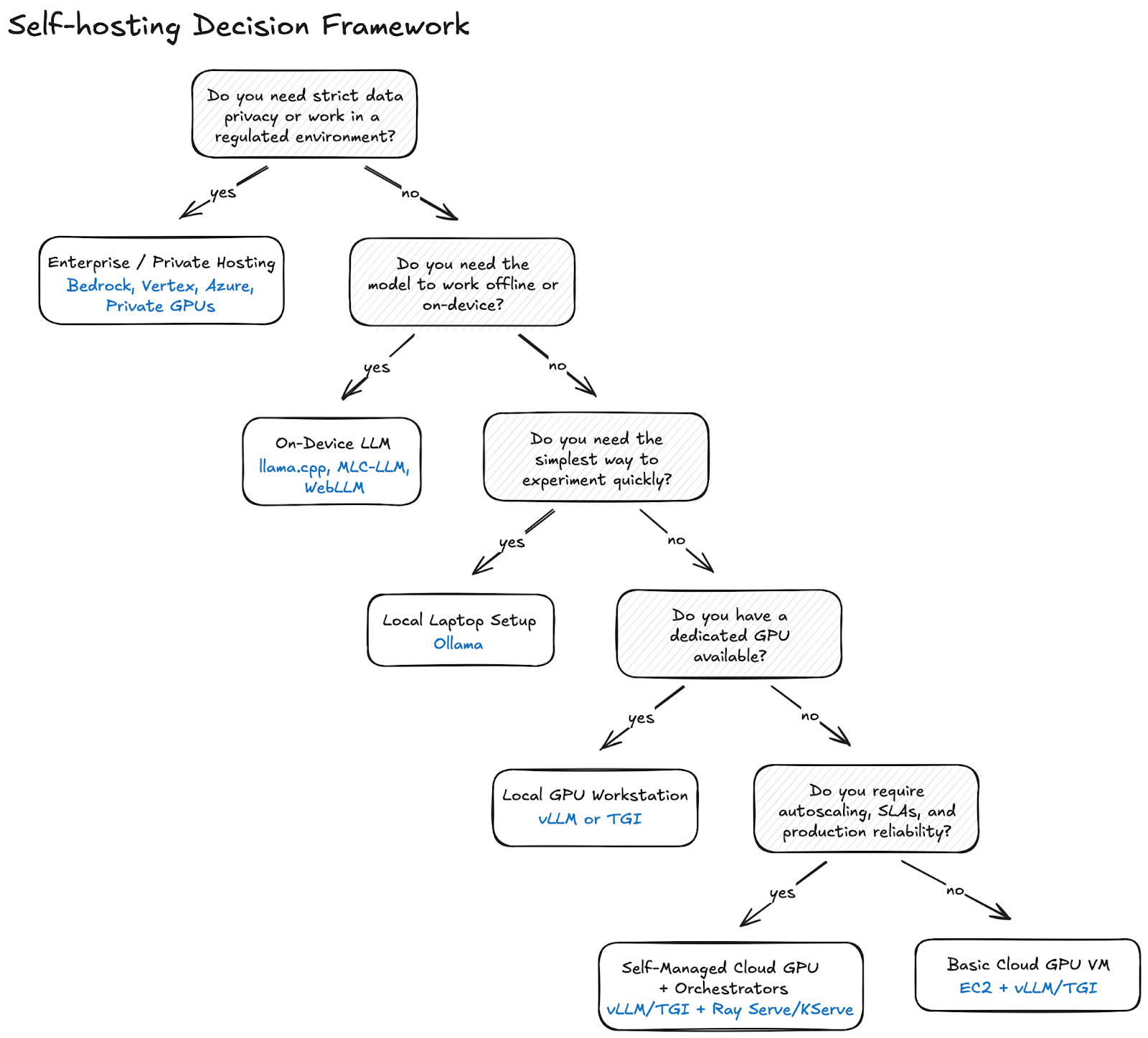

A simple decision framework to pick the right option for your needs.

The vocabulary you should know

LLM terminology escalates fast and can feel overwhelming if you're just getting started. Let’s break down, in simple, human language, the most common concepts behind modern LLMs that you’ll come across in the rest of this post:

| Term | Definition |
|---|---|
| Acceleration | Techniques or hardware that speed up inference. This includes GPUs, special chips, optimized libraries, and tricks that make models respond quicker. |
| Inference | When an AI model predicts or responds. |
| Instance | A single running copy of the LLM. |
| Nodes | Computing machines used to run models. |
| Orchestration | The process of coordinating all the moving parts (models, nodes, scaling, request routing) to ensure everything works as expected. |
| Parameters | Tiny, learned values inside an AI model that shape how it understands language, makes decisions, and generates responses. For example, when someone says "a 7B model," it means the model has 7 billion parameters: 7 billion tiny dials that collectively determine how it behaves. |
| Quantization | Shrinking the model's numbers (storing each parameter with fewer bits) to make it lighter and faster. |
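To make the link between parameters and quantization concrete, here is a rough back-of-the-envelope sketch. It assumes the only memory cost is storing the weights themselves; real deployments also need room for activations, caches, and runtime overhead, so treat the numbers as a lower bound. The function name `weight_memory_gb` is made up for this illustration.

```python
def weight_memory_gb(num_parameters: float, bits_per_parameter: int) -> float:
    """Approximate memory needed just to hold the weights, in GB (10^9 bytes)."""
    total_bytes = num_parameters * bits_per_parameter / 8  # 8 bits per byte
    return total_bytes / 1e9


# Compare the same 7B model stored at different precisions.
for bits in (16, 8, 4):
    size = weight_memory_gb(7e9, bits)
    print(f"7B model at {bits}-bit: ~{size:.1f} GB of weights")
```

Halving the bits per parameter halves the weight footprint, which is why quantized models fit on hardware that the full-precision version would not.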

Now that we speak the same language, let’s dig in.

Why do LLMs need special hardware?

While LLMs can and do run on normal hardware, they run much faster and more efficiently on specialized hardware because of the nature of their computation.

Simply put, they are essentially giant stacks of mathematical operations that scale with billions or trillions of parameters. All modern LLMs (from OpenAI, Google, Anthropic, Mistral, and so on) carry out operations like matrix multiplications, attention operations, and vector dot products to perform tasks like projection, translation, and transformation.
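To give a feel for those operations, here is a toy, pure-Python sketch of scaled dot-product attention, the standard formulation of the attention step. It is written for readability, not speed, and the tiny hand-picked vectors are illustrative assumptions; real models run the same math over thousands of dimensions and many layers using optimized libraries.

```python
import math


def dot(a, b):
    """Vector dot product."""
    return sum(x * y for x, y in zip(a, b))


def softmax(scores):
    """Turn raw scores into weights that sum to 1 (shifted by the max for stability)."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(queries, keys, values):
    """Scaled dot-product attention over lists of equal-length vectors."""
    scale = math.sqrt(len(keys[0]))
    outputs = []
    for q in queries:
        # Score the query against every key, then normalize the scores.
        weights = softmax([dot(q, k) / scale for k in keys])
        # Each output is a weighted average of the value vectors.
        outputs.append([
            sum(w * v[i] for w, v in zip(weights, values))
            for i in range(len(values[0]))
        ])
    return outputs


print(attention([[1.0, 0.0]], [[1.0, 0.0], [0.0, 1.0]], [[1.0, 2.0], [3.0, 4.0]]))
```

Every query is compared with every key, so the amount of arithmetic grows quickly with input length and model size. That is the workload specialized hardware is built to parallelize.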

You could very well run your models on everyday hardware like a MacBook, or even on a Raspberry Pi with low-bit quantization, but you’d quickly feel the pain: to get there, you’d be shrinking the precision of the parameters and compromising on parallelism and memory bandwidth.

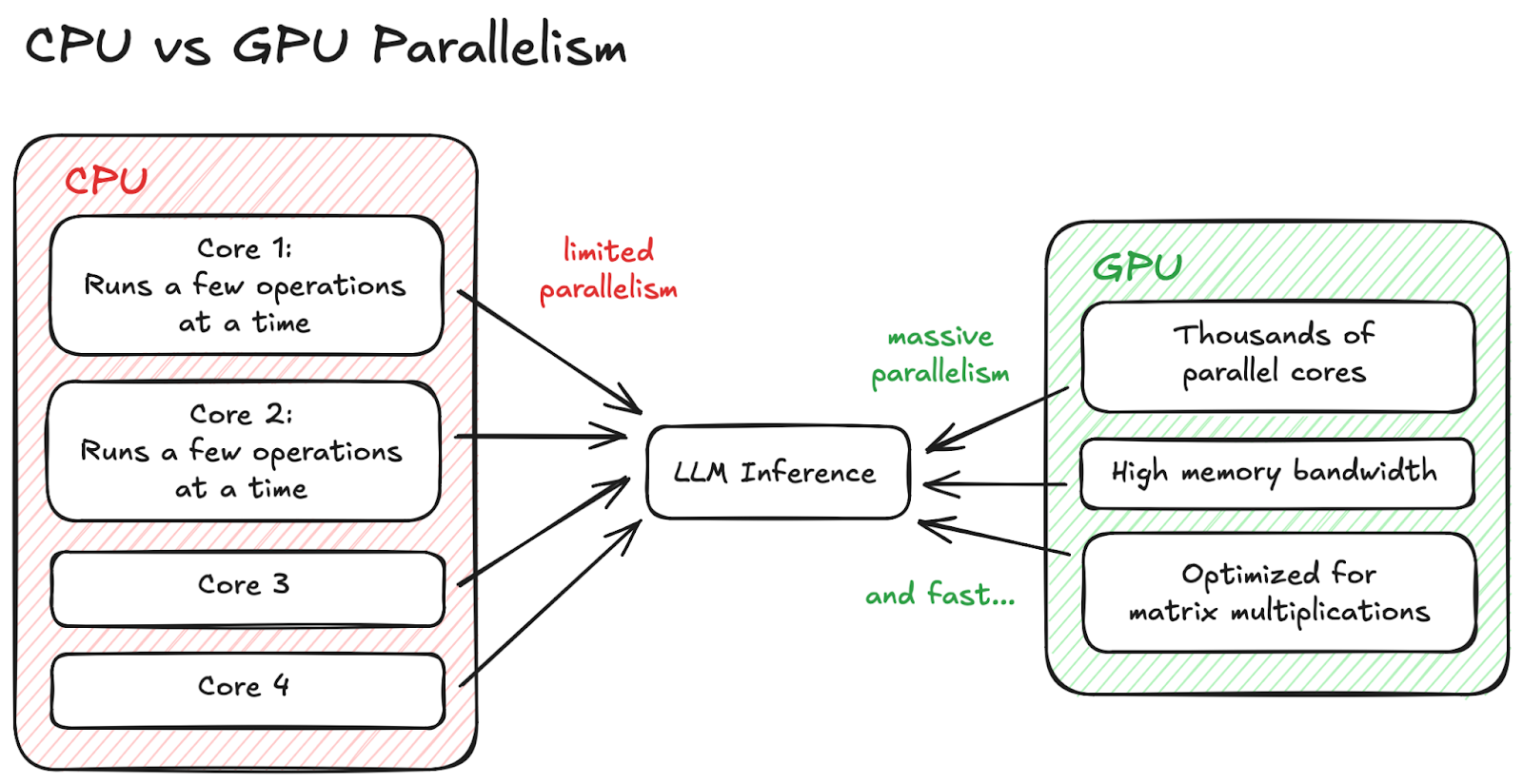

CPU vs GPU Parallelism


On a Raspberry Pi, the CPU can only run a few operations at once, which suits only very small models. A GPU, by contrast, can run thousands of math operations simultaneously, which is why specialized hardware matters so much for LLMs.

Should you self-host your LLM?

Before we dive into implementation options, let’s run a quick sanity check:

Key Considerations

- Do you need strict data privacy?

- Do you need predictable, controllable latency?

- Do you need offline or air-gapped inference?

- Do you need to fine-tune or customize the model deeply?

- Do you want to avoid per-token cloud pricing at scale?

- Are you okay owning the operational burden?

If reading those made you think, ‘yeah, I do need that,’ then self-hosting is likely a good fit.

Exploring self-hosting solutions

1. On a local machine

The easiest way to run LLMs locally is with Ollama. It abstracts away the hard parts, like pulling model weights, managing quantization, and running an inference server. The best part is that you can start it from a single command line, just like ngrok, on macOS, Windows, and Linux.

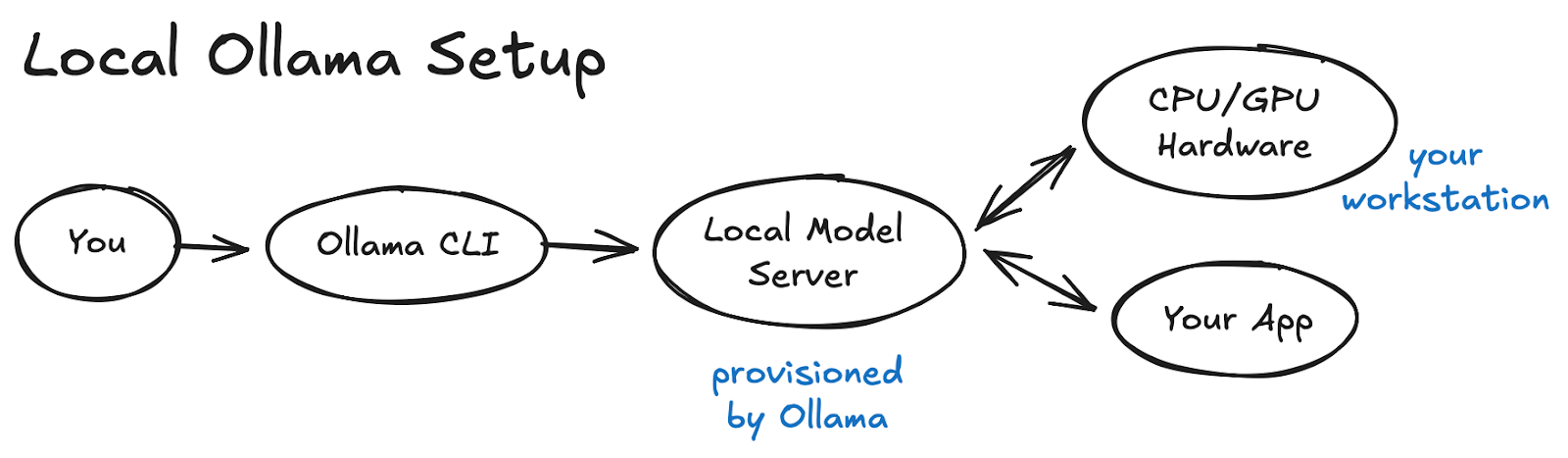

Local Ollama Setup


Here’s a guide from ngrok on how you can expose and secure your self-hosted Ollama API. This is hands-down the easiest way to experiment without touching infrastructure.
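Once Ollama is running, you can talk to it over its local REST API. The sketch below uses only the Python standard library and assumes Ollama is listening on its default address (`http://localhost:11434`) and that a model tagged `llama3` has already been pulled; swap in whatever model you actually have. The helper names are made up for this example.

```python
import json
import urllib.request

# Assumption: Ollama's default local address; change it if you run it elsewhere.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_generate_request(model: str, prompt: str) -> urllib.request.Request:
    """Build a non-streaming generate request for a local Ollama server."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(model: str, prompt: str) -> str:
    """Send the prompt and return the generated text (needs a running server)."""
    with urllib.request.urlopen(build_generate_request(model, prompt)) as resp:
        return json.loads(resp.read())["response"]
```

With the server up, `generate("llama3", "Explain quantization in one sentence.")` should return the model's answer as a string.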

2. On a local GPU workstation

If you already own a dedicated GPU machine, like NVIDIA’s Jetson Nano, or plan on investing in one, self-hosting your model on it is a no-brainer: depending on how much GPU memory it has, you can run larger models than a typical laptop can handle.

But… most laptops have GPUs these days, so why invest in a dedicated setup?

While most laptops today include GPUs, they’re built for bursty, user-facing workloads like rendering frames, gaming, or running short ML jobs, not the always-on, thermally constrained, sensor-heavy environments that edge AI devices must handle. Jetson-class hardware trades raw power for efficiency and stability, offering dedicated ML acceleration, low-watt inference, and direct interfaces for cameras and GPIO pins. In practice, a dedicated GPU machine can deliver reliable, 24/7 on-device models where a laptop would simply start overheating.

Running a dedicated inference server on such a machine gives you an OpenAI-compatible API, more control than Ollama offers, and higher throughput, which gets you close to a production-like workflow on local hardware.

Text Generation Inference (TGI) from Hugging Face is a fantastic example to demonstrate the efficiency of a GPU node, and here’s how you can get it up and running on an NVIDIA GPU.
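An OpenAI-compatible API means any client that can POST to `/v1/chat/completions` can talk to your server. Here is a minimal standard-library sketch of that exchange. The base URL `http://localhost:8080` is an assumption about where you exposed the server, and the `model` value your runtime expects may differ, so check its docs. The helper names are hypothetical.

```python
import json
import urllib.request


def build_chat_request(base_url, model, user_message, max_tokens=128):
    """Build a chat completion request in the OpenAI-compatible format."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": user_message}],
        "max_tokens": max_tokens,
    }
    return urllib.request.Request(
        base_url.rstrip("/") + "/v1/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def extract_reply(response_body: dict) -> str:
    """Pull the assistant's text out of a chat completion response."""
    return response_body["choices"][0]["message"]["content"]


def chat(base_url, model, user_message):
    """Send one message and return the reply (needs a running server)."""
    request = build_chat_request(base_url, model, user_message)
    with urllib.request.urlopen(request) as resp:
        return extract_reply(json.loads(resp.read()))
```

Because the request and response shapes are shared across compatible runtimes, the same client code should keep working if you later swap the server behind it.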

3. Self-hosting in the cloud

Cloud LLM hosting comes in two flavors:

a. Cloud-managed inference (Example: AWS Bedrock)

With a service like AWS Bedrock, you don’t deal with the headache of managing the hardware or scaling. Just choose the model and endpoint, and the Cloud Service Provider of your liking handles the rest.

While you do get access to proprietary models and get enterprise compliance baked in, you lose control over runtime and freedom to bring your own models on board.

Find the guide on deploying a model to AWS Bedrock.

b. Roll-your-own cloud GPU server (EC2 + TGI/vLLM)

If you’re willing to own the scaling, updates, networking, and security of your nodes in return for complete control and long-term cost savings, running your own cloud GPU instances paired with an inference runtime like vLLM or TGI might work well for you.

Here’s a walkthrough on Serving LLMs using vLLM and Amazon EC2 instances with AWS AI chips.

4. Using on-prem servers or edge devices

If you need strict data control, offline operation, or you’re working in regulated or physically constrained environments, running LLMs on on-prem servers or edge devices like the NVIDIA Jetson, Intel NUC, or other accelerated edge inference hardware is a surprisingly strong option. This setup also gives you absolute ownership over where your models live and how your data flows, just at the cost of maintaining the hardware yourself. I personally am experimenting with Pamir AI’s Distiller for this!

5. Containerized deployment with Docker

If you’re looking for a setup that’s repeatable, portable, and friendly to automation, containerizing your LLM runtime is a very clean route. You package everything, from the models and dependencies to the serving layer, into containers that you can run anywhere.

Some common LLM container images you can experiment with are:

Containers shine when you like infrastructure predictability and you want to avoid “it works on my machine” moments. One thing worth noting though is that containers by default don’t have GPU access unless the host system explicitly exposes it to them.
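To illustrate the GPU point, here is a small sketch that assembles a `docker run` command with and without GPU access. It assumes an NVIDIA GPU with the NVIDIA Container Toolkit installed on the host, which is what lets Docker's `--gpus` flag work. The TGI image, the port mapping, and the `<your-model-id>` placeholder are illustrative choices, and `docker_run_command` is a hypothetical helper.

```python
import shlex


def docker_run_command(image, host_port, container_port, server_args=(), gpus="all"):
    """Assemble a `docker run` argv; pass gpus=None for a CPU-only container."""
    cmd = ["docker", "run", "--rm"]
    if gpus:
        # Without this flag the container cannot see the host's GPUs.
        cmd += ["--gpus", gpus]
    cmd += ["-p", f"{host_port}:{container_port}", image, *server_args]
    return cmd


# Illustrative values: TGI image, host port 8080 mapped to container port 80.
command = docker_run_command(
    "ghcr.io/huggingface/text-generation-inference:latest",
    8080,
    80,
    ["--model-id", "<your-model-id>"],
)
print(shlex.join(command))
```

Building the command as a list keeps it easy to hand to `subprocess.run` from an automation script, and `shlex.join` gives you a copy-pasteable version for the terminal.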

6. Self-hosted, full AI stack platforms

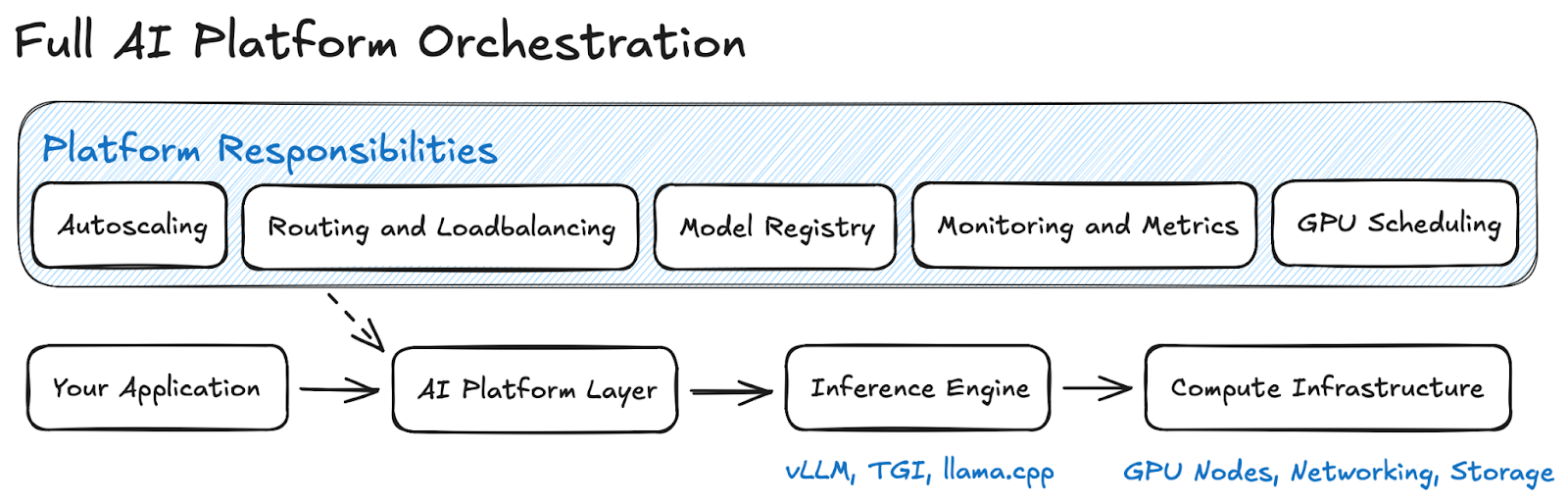

If you’re open to trading a bit of operational simplicity for a lot of acceleration and orchestration power, a self-hosted AI platform could be your sweet spot. These tools sit above your inference engine and handle the messy bits of running LLMs at scale, as can be visualized below:

Full Orchestration

Full Orchestration

Open WebUI is a great starting point and here’s how you can get cracking right away!

7. Running LLMs inside an app?!

If you want full offline capability, or you’re building apps where shipping a server isn’t an option, running the model directly inside your application can be ✨. Instead of hosting an API, the model becomes part of the app binary or runtime.

This includes embedding LLMs inside:

- Desktop apps

- Mobile apps

- Backend binaries

- Browsers

This approach gives you fully offline inference with no infrastructure; the only downside is that you may need to run a smaller model unless you're running on specialised hardware.

A great way to run this would be by using runtimes like MLC-LLM, WebLLM, or even llama.cpp compiled directly into your app binary, all of which make it very practical to ship a small, fast model straight inside your application without relying on any external server.

Which option is the best for your needs?

Choosing a self-hosting strategy is a lot like choosing where to live: sometimes a massive condo is perfect, and sometimes you just want to be left alone with a shed, and maybe a GPU. Here are some situations that may influence your pick:


Decision Framework
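As a rough companion to the framework, here is one possible way to encode the trade-offs described in the sections above as a tiny lookup. The need labels and the mapping are this sketch's own reading of the options covered in this post, not an official rule set, so adjust them to your situation.

```python
# Hypothetical need labels mapped to the options discussed in this post.
RULES = [
    ("experimenting", "1. Local machine with Ollama"),
    ("always_on_gpu", "2. Local GPU workstation"),
    ("no_hardware_ops", "3a. Cloud-managed inference"),
    ("cloud_control", "3b. Roll-your-own cloud GPU server"),
    ("offline_or_regulated", "4. On-prem servers or edge devices"),
    ("repeatable_deploys", "5. Containerized deployment with Docker"),
    ("orchestration_at_scale", "6. Self-hosted, full AI stack platform"),
    ("no_server_possible", "7. Model embedded inside the app"),
]


def suggest_options(needs):
    """Return the options whose need label appears in `needs`, in article order."""
    matches = [option for need, option in RULES if need in needs]
    # With no specific requirement, start with the lowest-effort option.
    return matches or [RULES[0][1]]


print(suggest_options({"offline_or_regulated", "repeatable_deploys"}))
```

Several needs can apply at once, and the options combine naturally: for example, a containerized runtime deployed on on-prem hardware.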

Ready to get your model out in the wild?

Self-hosting an LLM isn't a single path. From “I want to run Llama 3 on my Mac” to “I need a multi-tenant GPU cluster with autoscaling,” there’s a setup that fits everyone, depending on scale, budget, and comfort.

Aaishika S Bhattacharya

Developer Relations practitioner and part-time wanderer. Living at the intersection of community, code, and content; making complex tech feel approachable, observable, and human.